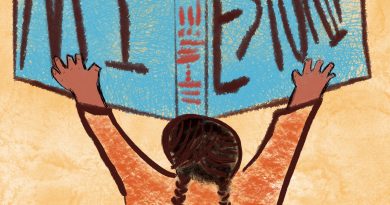

Who controls what we see?

If the internet is the collection of human knowledge, we as a species have never failed so spectacularly.

Our phones are powerful tools, and these black mirrors relay a lot of information to us. Content creators use that influence, whether it’s through ads, posts or memes. All of this content is meant to seed a thought, and a thought is all it takes to get you thinking.

Have you ever seen an ad for something you thought about but never expressed interest in? Advertisers use algorithms to create personalized ads that seem to know more about us than we do.

These are the minds of robots at work. A program is nothing more than a collection of instructions written by a person and translated for a computer to understand.

So the next time Netflix recommends a show, Twitter shows you an ad for a certain product, or Amazon encourages you to buy items similar to what other people have purchased, remember that it is all an AI algorithm learning about you and others based on your interactions on those sites and other websites.

The robot revolution won’t happen in one day; it’s been happening. These AI algorithms can already spot patterns in human behavior. Take, for example, the mess that was Cambridge Analytica (CA).

The Trump campaign contracted CA to collect voter data from Facebook. Facebook user data was used to create profiles of undecided voters in order to better target them with advertisements meant to change how those individuals vote.

The worst part was that this data was not stolen; it was given to CA by Facebook. CA took advantage of Facebook’s lax privacy code of conduct to gather data from 270,000 users who gave a quiz application permission to do so. CA then scraped those users’ contacts and used them to gather their friends’ and families’ Facebook data, resulting in a dataset of over 87 million users, according to the Guardian.

Technology is being used to manipulate voter behavior, and this is not even the worst thing Facebook has been used for.

In 2016, trolls later discovered to be Myanmar military officials were used to spread hate about the Rohingya population, according to a UN investigative report. It led to a genocide that is still displacing millions of Muslim Rohingya from Myanmar, according to a Reuters report.

A similar incident occurred in the U.S. with Russian trolls meddling in the 2016 election. According to the “Report on the Investigation into Russian Interference in the 2016 Presidential Election” by Special Counsel Robert Mueller, Russia used Facebook advertisements and Facebook groups to target U.S. voters and create political unrest and polarization.

Should we fear the suggestions AI makes for us? Or should we fear the people who manipulate these algorithms to further their own agendas?

If we wish to be the ones in control of the content we view, we must seek it out on our own initiative.